group-telegram.com/neural_cell/271

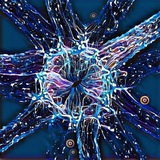

Review | Smart stimulation patterns for visual prostheses

tl;dr: Differentiable PyTorch simulator translating V1 stimulation to phosphene perception for end-to-end optimization

- Fully differentiable pipeline allowing optimization of all stimulation parameters via backpropagation

- Grounded in a large body of experimental data.

- Bridges the gap between electrode-level stimulation and the resulting visual perception
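To make "optimization via backpropagation" concrete, here is a minimal sketch in plain Python. It is not the paper's simulator: the sigmoid forward model, the threshold and slope values, and the names `phosphene_brightness` and `optimize_current` are all hypothetical, and the gradient is written by hand where PyTorch autograd would normally do the work.

```python
import math

# Hypothetical toy forward model: phosphene brightness as a sigmoid of the
# stimulation current around an activation threshold. Values are illustrative.
def phosphene_brightness(current_ua, threshold_ua=40.0, slope=0.2):
    return 1.0 / (1.0 + math.exp(-slope * (current_ua - threshold_ua)))

def brightness_grad(current_ua, threshold_ua=40.0, slope=0.2):
    # Analytic derivative of the sigmoid with respect to the current.
    b = phosphene_brightness(current_ua, threshold_ua, slope)
    return slope * b * (1.0 - b)

def optimize_current(target, current_ua=30.0, lr=200.0, steps=500):
    # Gradient descent on the squared error between simulated and target brightness.
    for _ in range(steps):
        b = phosphene_brightness(current_ua)
        grad = 2.0 * (b - target) * brightness_grad(current_ua)  # chain rule
        current_ua -= lr * grad
    return current_ua

best_current = optimize_current(target=0.8)
print(round(best_current, 2), round(phosphene_brightness(best_current), 3))
```

Because every step of the forward model is differentiable, the same loop extends to many parameters at once, which is the property the simulator exploits.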

link: https://doi.org/10.7554/eLife.85812

tl;dr: Neural encoder + preferential Bayesian optimization.

- Train a deep stimulus encoder (DSE) that maps images to stimulation patterns.

- Feed 13 patient-specific parameters ("patient params") into the DSE as an additional input.

- Use Preferential Bayesian Optimization with a Gaussian process (GP) prior to update only the patient params, relying solely on binary comparisons

- Achieves 80% preference alignment after only 150 comparisons despite 20% simulated noise in human feedback
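The feedback loop above can be sketched with a much simpler stand-in. The code below does not implement GP-based preferential Bayesian optimization; it swaps in a plain champion-versus-challenger search to show how 13 parameters can be tuned from binary answers that are flipped 20% of the time. The quadratic `utility`, the starting point, and the step size are assumptions made for illustration.

```python
import random

N_PARAMS = 13  # number of patient parameters mentioned above

def utility(params, true_params):
    # Hypothetical hidden utility: higher when params are closer to the
    # simulated patient's true values.
    return -sum((p - t) ** 2 for p, t in zip(params, true_params))

def noisy_duel(a, b, true_params, rng, flip_prob=0.2):
    # True if the simulated patient says "a is better than b".
    # The honest answer is flipped with probability flip_prob.
    prefers_a = utility(a, true_params) > utility(b, true_params)
    return (not prefers_a) if rng.random() < flip_prob else prefers_a

def duel_search(true_params, rng, n_comparisons=150, step=0.1, flip_prob=0.2):
    # Stand-in for PBO: perturb the current champion and keep the
    # challenger whenever the (noisy) patient prefers it.
    champion = [0.5] * N_PARAMS
    for _ in range(n_comparisons):
        challenger = [min(1.0, max(0.0, p + rng.gauss(0.0, step))) for p in champion]
        if noisy_duel(challenger, champion, true_params, rng, flip_prob):
            champion = challenger
    return champion

rng = random.Random(0)
true_params = [rng.random() for _ in range(N_PARAMS)]
estimate = duel_search(true_params, rng)
print(len(estimate), round(utility(estimate, true_params), 3))
```

A GP-based method replaces the random challenger with one chosen by an acquisition function, which is how a budget of comparisons can be spent more efficiently than this naive search does.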

link: https://arxiv.org/abs/2306.13104

tl;dr: ML system that predicts and optimizes multi-electrode stimulation to achieve specific neural activity patterns

- Utah array on monkey PFC

- Single- or two-electrode stimulation with fixed frequency and amplitude

- Collect paired (stim, signals) data across multiple sessions

- Extract latent features using Factor Analysis (FA)

- Align latent spaces across sessions using Procrustes method

- Train CNN to predict latent states from stim patterns

- Apply epsilon-greedy optimizer to find optimal stimulation in closed-loop
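The last step of this pipeline can be sketched as follows. This is an assumption-heavy illustration, not the paper's code: stimulation patterns are tuples of electrode indices, `predicted_error` stands in for the CNN's predicted distance to the target latent state, and `measure_error` is a hypothetical callback that runs one closed-loop trial and returns the observed distance.

```python
import random

def epsilon_greedy_search(candidates, predicted_error, measure_error, rng,
                          epsilon=0.1, n_trials=200):
    estimates = dict(predicted_error)    # start from the model's predictions
    counts = {c: 1 for c in candidates}  # count each prediction as one pseudo-observation
    for _ in range(n_trials):
        if rng.random() < epsilon:
            choice = rng.choice(candidates)              # explore a random pattern
        else:
            choice = min(candidates, key=estimates.get)  # exploit the current best estimate
        observed = measure_error(choice)
        counts[choice] += 1
        # Running mean of prediction plus observations for this pattern.
        estimates[choice] += (observed - estimates[choice]) / counts[choice]
    return min(candidates, key=estimates.get), estimates

# Illustrative data: one- and two-electrode patterns with made-up errors.
candidates = [(0,), (1,), (0, 1)]
predicted_error = {(0,): 0.1, (1,): 0.3, (0, 1): 0.6}  # the model is wrong about (0,)
true_error = {(0,): 0.9, (1,): 0.2, (0, 1): 0.5}

best, estimates = epsilon_greedy_search(
    candidates, predicted_error, true_error.get, random.Random(0))
print(best)
```

Seeding the estimates with model predictions is the design choice that matters here: the optimizer starts from what the model believes and lets closed-loop observations overrule it where the two disagree.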

link: https://www.nature.com/articles/s41467-023-42338-8

tl;dr: Temporal dithering algorithm exploits neural integration window to enhance visual prosthesis performance by 40%

- Uses triphasic pulses at 0.1 ms intervals, optimized within the neural integration time window (10-20 ms)

- Implements spatial multiplexing with 200 μm exclusion zones to prevent electrode interference

- Achieves 87% specificity in targeting ON vs OFF retinal pathways, solving a fundamental limitation of current implants
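One way to read the exclusion-zone idea is as a scheduling constraint: electrodes fired in the same time step must sit at least 200 μm apart. The sketch below is a greedy selection under that assumed reading; the electrode coordinates, priority scores, and the function name `select_simultaneous` are hypothetical and not taken from the paper.

```python
import math

def select_simultaneous(electrodes, priorities, min_distance_um=200.0):
    # Greedy pass: take electrodes in order of priority and skip any that
    # fall inside the exclusion zone of an already chosen electrode.
    order = sorted(electrodes, key=lambda name: priorities[name], reverse=True)
    chosen = []
    for name in order:
        x, y = electrodes[name]
        if all(math.hypot(x - electrodes[c][0], y - electrodes[c][1]) >= min_distance_um
               for c in chosen):
            chosen.append(name)
    return chosen

# Illustrative layout in micrometres.
electrodes = {"e0": (0.0, 0.0), "e1": (100.0, 0.0), "e2": (250.0, 0.0), "e3": (0.0, 300.0)}
priorities = {"e0": 0.9, "e1": 0.8, "e2": 0.5, "e3": 0.7}

print(select_simultaneous(electrodes, priorities))  # ['e0', 'e3', 'e2']
```

Electrodes skipped in one step (here `e1`) would be scheduled in a later step, which is where the temporal dithering comes in: the steps are short enough to fall inside the same integration window.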

link: https://doi.org/10.7554/eLife.83424

my thoughts

The field is finally moving beyond simplistic zap-and-see approaches. These papers tackle predicting perception, minimizing patient burden, targeting neural states, and improving power efficiency. What excites me most is how these methods could work together - imagine MiSO's targeting combined with human feedback and efficient stimulation patterns. The missing piece? Understanding how neural activity translates to actual perception. Current approaches optimize for either brain patterns OR what people see, not both. I think the next breakthrough will come from models that bridge this gap, perhaps using contrastive learning to connect brain recordings with what people actually report seeing.