MiniMax-M1 is the world's first open-weight hybrid reasoning LLM, with a 1M-token context window (8× DeepSeek R1) and a hybrid architecture that combines MoE with lightning attention.

• 456B parameters (45.9B activated per token); very efficient generation: 25% of DeepSeek R1's FLOPs at a generation length of 100K tokens

• Trained with RL using a new algorithm, CISPO, on real-world tasks ranging from math to coding

• Training cost $534K; two versions are available, with 40K and 80K "thinking budgets"
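The headline numbers above can be sanity-checked with a few lines of arithmetic. This is only a sketch: the constants are copied from the post, and the function and variable names are illustrative, not taken from any MiniMax code.

```python
# Figures quoted in the post; names are hypothetical and used only for illustration.
M1_CONTEXT_TOKENS = 1_000_000    # "1M context"
CONTEXT_RATIO_VS_R1 = 8          # "8x DeepSeek R1"
TOTAL_PARAMS_B = 456.0           # total parameters, in billions
ACTIVE_PARAMS_B = 45.9           # parameters activated per token, in billions
FLOPS_FRACTION_VS_R1 = 0.25      # share of DeepSeek R1's FLOPs at 100K generated tokens


def implied_r1_context(m1_context: int, ratio: int) -> int:
    """Context length implied for DeepSeek R1 by the post's 8x claim."""
    return m1_context // ratio


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the MoE parameters that is used for any single token."""
    return active_b / total_b


if __name__ == "__main__":
    print(implied_r1_context(M1_CONTEXT_TOKENS, CONTEXT_RATIO_VS_R1))   # 125000
    print(f"{active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B):.1%}")    # 10.1%
    print(1 / FLOPS_FRACTION_VS_R1)                                     # 4.0
```

So roughly one tenth of the weights is active per token, the 8× claim implies a context of about 125K tokens for DeepSeek R1, and the 25% FLOPs figure corresponds to about 4× less compute at 100K generated tokens.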

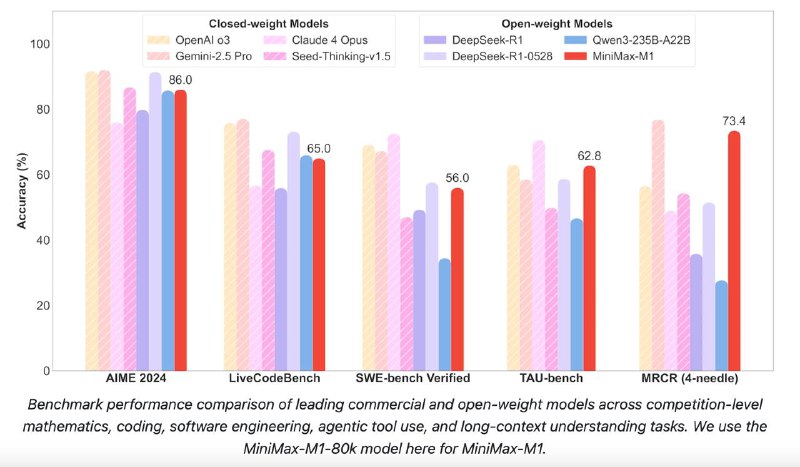

• Outperforms DeepSeek R1 and Qwen3-235B on math and coding benchmarks

• Top results on software engineering and reasoning tasks
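The post names CISPO but does not describe it. The sketch below assumes the formulation given in the linked tech report: the importance-sampling weight is clipped and treated as a constant, instead of clipping the token update as PPO-style objectives do. It compares the per-token coefficient that multiplies the gradient of log π under each scheme. The epsilon values and function names are placeholders, not the settings used for MiniMax-M1.

```python
def clip(x: float, lo: float, hi: float) -> float:
    """Clamp x to the interval [lo, hi]."""
    return max(lo, min(hi, x))


def ppo_grad_weight(ratio: float, advantage: float, eps: float = 0.2) -> float:
    """Coefficient on grad(log pi) for one token under the PPO clipped objective.

    When the clipped branch of min(r*A, clip(r)*A) is selected, the token
    contributes no gradient at all.
    """
    clipped_out = (advantage > 0 and ratio > 1 + eps) or (
        advantage < 0 and ratio < 1 - eps
    )
    return 0.0 if clipped_out else ratio * advantage


def cispo_grad_weight(
    ratio: float, advantage: float, eps_low: float = 0.2, eps_high: float = 0.2
) -> float:
    """Coefficient on grad(log pi) when the clipped IS weight is held constant.

    Assumed objective: stop_gradient(clip(r)) * A * log pi, so every token
    keeps a bounded, non-zero gradient whenever its advantage is non-zero.
    """
    return clip(ratio, 1 - eps_low, 1 + eps_high) * advantage


if __name__ == "__main__":
    # A token whose probability rose sharply (ratio 1.5) with positive advantage.
    print(ppo_grad_weight(1.5, 1.0))    # 0.0: the token is dropped from the update
    print(cispo_grad_weight(1.5, 1.0))  # 1.2: the token still contributes, with a capped weight
```

Under these assumptions, the difference is that tokens with large probability ratios still contribute to the update, with a bounded weight, rather than being zeroed out.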

Benchmarks:

AIME 2024: 86.0 (M1-80K) vs 85.7 (Qwen3) vs 79.8 (DeepSeek R1)

SWE-bench Verified: 56.0 vs 34.4 (Qwen3)

OpenAI-MRCR (128k): 73.4 vs 27.7 (Qwen3)

TAU-bench (airline): 62.0 vs 34.7 (Qwen3)

LongBench-v2: 61.5 vs 50.1 (Qwen3)
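To see where the gap is largest, the scores above can be turned into margins. This is a small sketch with one assumption: the first number in every row is taken to be MiniMax-M1-80K, which the post states explicitly only for AIME 2024. The helper names are illustrative.

```python
# Scores copied from the list above as (MiniMax-M1, Qwen3-235B).
# Assumption: the first number in each row is the M1-80K result.
SCORES = {
    "AIME 2024": (86.0, 85.7),
    "SWE-bench Verified": (56.0, 34.4),
    "OpenAI-MRCR (128k)": (73.4, 27.7),
    "TAU-bench (airline)": (62.0, 34.7),
    "LongBench-v2": (61.5, 50.1),
}


def margins(scores: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Point difference (M1 minus Qwen3) per benchmark, rounded to one decimal."""
    return {name: round(m1 - qwen, 1) for name, (m1, qwen) in scores.items()}


def largest_gap(scores: dict[str, tuple[float, float]]) -> str:
    """Name of the benchmark with the widest M1 lead."""
    m = margins(scores)
    return max(m, key=m.get)


if __name__ == "__main__":
    for name, diff in margins(SCORES).items():
        print(f"{name}: +{diff}")
    print("Largest gap:", largest_gap(SCORES))
```

On these numbers the lead is narrow on AIME 2024 (+0.3) and widest on the long-context OpenAI-MRCR test (+45.7), which fits the post's emphasis on the 1M-token context.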

▪ Hugging Face: https://huggingface.co/collections/MiniMaxAI/minimax-m1-68502ad9634ec0eeac8cf094

▪ GitHub: https://github.com/MiniMax-AI/MiniMax-M1

▪ Tech Report: https://github.com/MiniMax-AI/MiniMax-M1/blob/main/MiniMax_M1_tech_report.pdf

@ai_machinelearning_big_data

#llm #reasoningmodels #minimaxm1