group-telegram.com/gradientdude/338


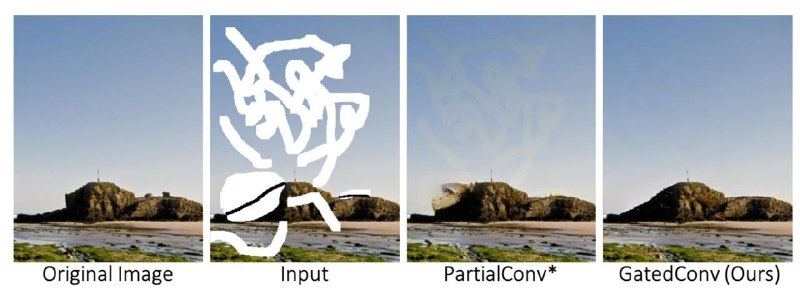

Image Inpainting: Partial Convolution vs Gated Convolution

Let’s talk about an essential component of image inpainting networks: convolutions. #fundamentals

It is common in image inpainting models to feed a corrupted image (with some parts masked out) to the generator network. But we don’t want the network layers to rely on empty regions when features are computed. There is a straightforward solution to this problem (partial convolution) and a more elegant one (gated convolution).

🔻Partial convolutions make the convolutions dependent only on valid pixels. They are like normal convolutions, but with a hard (binary) mask multiplication applied to each output feature map. The first mask is computed directly from the occluded image or provided as input by the user. The mask for every subsequent partial convolution is computed by finding non-zero elements in the input feature maps. This approach has several limitations:

- Partial convolution heuristically classifies every spatial location as either valid or invalid. The mask in the next layer is set to one no matter how many valid pixels were covered by the filter in the previous layer (for example, for a 3x3 conv, 1 valid pixel and 9 valid pixels are treated the same when updating the mask).

- For partial convolution the invalid pixels will progressively disappear in deep layers, gradually converting all mask values to ones.

- Partial convolution is incompatible with additional user inputs. However, we would like to be able to use extra user inputs for conditional generation (for example, a sparse sketch inside the mask).

- All channels in each layer share the same mask, which limits the flexibility. Essentially, partial convolution can be viewed as un-learnable single-channel feature hard-gating.
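To make the mask-update behaviour concrete, here is a minimal NumPy sketch of a single-channel partial convolution. It is an illustration under stated assumptions, not a reference implementation: the function name `partial_conv2d`, the stride-1 zero-padded setup, the "1 = valid pixel" mask convention, and the rescaling by window size over valid count are choices made for this example.

```python
import numpy as np

def partial_conv2d(x, mask, weight, bias=0.0):
    """Single-channel partial convolution (stride 1, zero 'same' padding).

    x, mask: (H, W) arrays; mask is 1 for valid pixels and 0 for holes
             (this convention is an assumption of the sketch).
    weight:  (k, k) kernel with odd k.
    Returns (output, updated_mask).
    """
    k = weight.shape[0]
    pad = k // 2
    xp = np.pad(x * mask, pad)  # hole pixels contribute nothing
    mp = np.pad(mask, pad)      # padded border counts as invalid
    h, w = x.shape
    out = np.zeros((h, w))
    new_mask = np.zeros((h, w))
    for i in range(h):
        for j in range(w):
            valid = mp[i:i + k, j:j + k].sum()
            if valid > 0:
                window = xp[i:i + k, j:j + k]
                # Rescale to compensate for the missing pixels in the window.
                out[i, j] = (weight * window).sum() * (k * k / valid) + bias
                # Hard rule: one valid pixel is enough to mark the output valid.
                new_mask[i, j] = 1.0
    return out, new_mask

# An 8x8 image of ones with a 4x4 hole in the middle.
x = np.ones((8, 8))
mask = np.ones((8, 8))
mask[2:6, 2:6] = 0.0
weight = np.full((3, 3), 1.0 / 9.0)

out1, mask1 = partial_conv2d(x, mask, weight)
out2, mask2 = partial_conv2d(out1, mask1, weight)

# Number of invalid pixels before and after each layer.
hole_sizes = [int((m == 0).sum()) for m in (mask, mask1, mask2)]
print(hole_sizes)  # [16, 4, 0]
```

After just two 3x3 layers the hole is gone from the mask, which is the "invalid pixels progressively disappear" effect described above, and the rule never distinguishes a window with 1 valid pixel from a window with 9.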

🔻Gated convolutions. Instead of a hard-gating mask updated with rules, gated convolutions learn a soft mask automatically from data. Each one has a “soft gating” block (consisting of one convolutional layer) which takes the input feature map and predicts an appropriate soft mask, which is then applied to the output of the convolution.

- Can take any extra user guidance (e.g., mask, sketch) as input. They can be all concatenated with the corrupted image and fed to the first gated convolution.

- Learns a dynamic feature selection mechanism for each channel and each spatial location.

- Interestingly, visualizations of intermediate gating values show that it learns to select features not only according to the background, mask, and sketch, but also according to semantic segmentation in some channels.

- Even in deep layers, gated convolution learns to highlight the masked regions and sketch information in separate channels to better generate inpainting results.
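For comparison, a gated convolution can be sketched in a few lines of NumPy. This is a rough illustration, not the original implementation: the helper names `conv2d` and `gated_conv2d`, the random weights, the ELU activation, the 5-channel input layout (RGB + mask + sketch), and the "1 = valid pixel" mask convention are all assumptions made for the example.

```python
import numpy as np

def conv2d(x, weight, bias):
    """Plain stride-1 convolution with zero 'same' padding.

    x: (C_in, H, W), weight: (C_out, C_in, k, k), bias: (C_out,).
    """
    k = weight.shape[-1]
    pad = k // 2
    xp = np.pad(x, ((0, 0), (pad, pad), (pad, pad)))
    # windows has shape (C_in, H, W, k, k)
    windows = np.lib.stride_tricks.sliding_window_view(xp, (k, k), axis=(1, 2))
    return np.einsum("chwij,ocij->ohw", windows, weight) + bias[:, None, None]

def gated_conv2d(x, w_feat, b_feat, w_gate, b_gate):
    """Output = activation(feature conv) * sigmoid(gating conv)."""
    feature = conv2d(x, w_feat, b_feat)
    gating = conv2d(x, w_gate, b_gate)
    # Soft mask in (0, 1), one value per output channel and spatial location.
    gate = 1.0 / (1.0 + np.exp(-gating))
    # ELU on the features (the activation choice is an assumption here).
    activated = np.where(feature > 0, feature, np.expm1(np.minimum(feature, 0.0)))
    return activated * gate, gate

rng = np.random.default_rng(0)
h, w = 16, 16
image = rng.random((3, h, w))
mask = np.ones((1, h, w))
mask[:, 4:12, 4:12] = 0.0      # 1 = valid, 0 = hole (assumed convention)
sketch = np.zeros((1, h, w))
sketch[:, 8, 4:12] = 1.0       # a sparse user stroke inside the hole

# Extra guidance is simply concatenated with the corrupted image.
x = np.concatenate([image * mask, mask, sketch], axis=0)  # (5, H, W)

c_in, c_out, k = x.shape[0], 8, 3
w_feat = rng.normal(0.0, 0.1, (c_out, c_in, k, k))
w_gate = rng.normal(0.0, 0.1, (c_out, c_in, k, k))
b_feat = np.zeros(c_out)
b_gate = np.zeros(c_out)

out, gate = gated_conv2d(x, w_feat, b_feat, w_gate, b_gate)
print(out.shape, gate.shape)
```

Unlike the hard mask of a partial convolution, `gate` here has its own value for every channel and location, and in a real network `w_gate` would be trained together with `w_feat`.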

@gradientdude

BY Gradient Dude
